Optimizing Video Call Quality: Lessons from 100ms' Dogfooding and Testing

April 3, 2024

WebRTC powers most live video conferencing tools you see - Google Meet, Teams, your telehealth provider’s app, and the live spaces in your favorite social media app. WebRTC has allowed video conferencing to move from closed, expensive systems to browsers and devices everywhere. But for developers building with WebRTC, debugging call issues like “Can’t hear you” or “You broke up there for a while” isn’t straightforward. This is especially true during rollout.

Developers often go live with settings that are not optimized for their use case. End-users don’t always have the same (often great) network conditions and devices used during development, and simulating poor network conditions for WebRTC isn’t part of most front-end developers’ toolkit. For example, a telehealth app’s end-users can sometimes have:

- Patchy WiFi setups (routers placed far away from rooms), with above-average packet drops.

- Older Safari versions, which can have bugs that cause echo during video calls.

These are specific issues, but they can extend to other devices and use cases. Catching them requires testing outside office WiFi, with simulated UDP packet loss, and on older iPhones.

This is part 1 of our series on how 100ms’ enterprise support adds dogfooding and early check-ins to help developers go live with minimal errors.

At 100ms, we bridge this gap with our premium and enterprise support packages by offering dogfooding sessions. This involves 100ms’ customer success team running tests on the customer’s app before rollout.

As part of this, 100ms’ customer success manager understands the end-users’ setup and designs test cases. These cases are run on the customer’s beta app on 100ms’ device farm and run through our setup to simulate stressful network conditions - higher RTT (Round trip time), packet losses, or throttled upload & download bitrates. During these tests, 100ms runs through multiple settings, and then recommends the optimum settings to the customer.
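The network conditions listed above can be written down as a small test matrix. The sketch below is one possible way to do that on a Linux test machine using `tc` with the `netem` queueing discipline; the article does not describe 100ms' actual tooling, so the profile names, the values, and the `eth0` device are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class NetworkProfile:
    """One simulated network condition (all values are illustrative)."""
    name: str
    delay_ms: int = 0                # extra delay added to outgoing packets
    loss_pct: float = 0.0            # random packet loss, in percent
    rate_kbit: Optional[int] = None  # bandwidth cap; None means uncapped


def netem_command(profile: NetworkProfile, device: str = "eth0") -> str:
    """Build a Linux `tc ... netem` command string for the given profile."""
    parts = [f"tc qdisc replace dev {device} root netem"]
    if profile.delay_ms > 0:
        parts.append(f"delay {profile.delay_ms}ms")
    if profile.loss_pct > 0:
        parts.append(f"loss {profile.loss_pct:g}%")
    if profile.rate_kbit is not None:
        parts.append(f"rate {profile.rate_kbit}kbit")
    return " ".join(parts)


# Hypothetical test matrix; real values should come from the end-users' setup.
PROFILES = [
    NetworkProfile("baseline"),
    NetworkProfile("high_rtt", delay_ms=150),
    NetworkProfile("lossy_wifi", delay_ms=40, loss_pct=5),
    NetworkProfile("throttled", rate_kbit=500),
]

if __name__ == "__main__":
    for profile in PROFILES:
        print(f"{profile.name}: {netem_command(profile)}")
```

The function only builds command strings, so the matrix can be reviewed before anything is applied. Running the commands needs root privileges, and the shaping applies only to traffic leaving the chosen interface.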

This testing often saves the customer 4-6 weeks of iteration with real users to get the same settings.

This blog outlines the impact of these dogfooding sessions with a real-world case study.

Case study

Let’s first understand quickly what parameters a developer can set when integrating a WebRTC application.

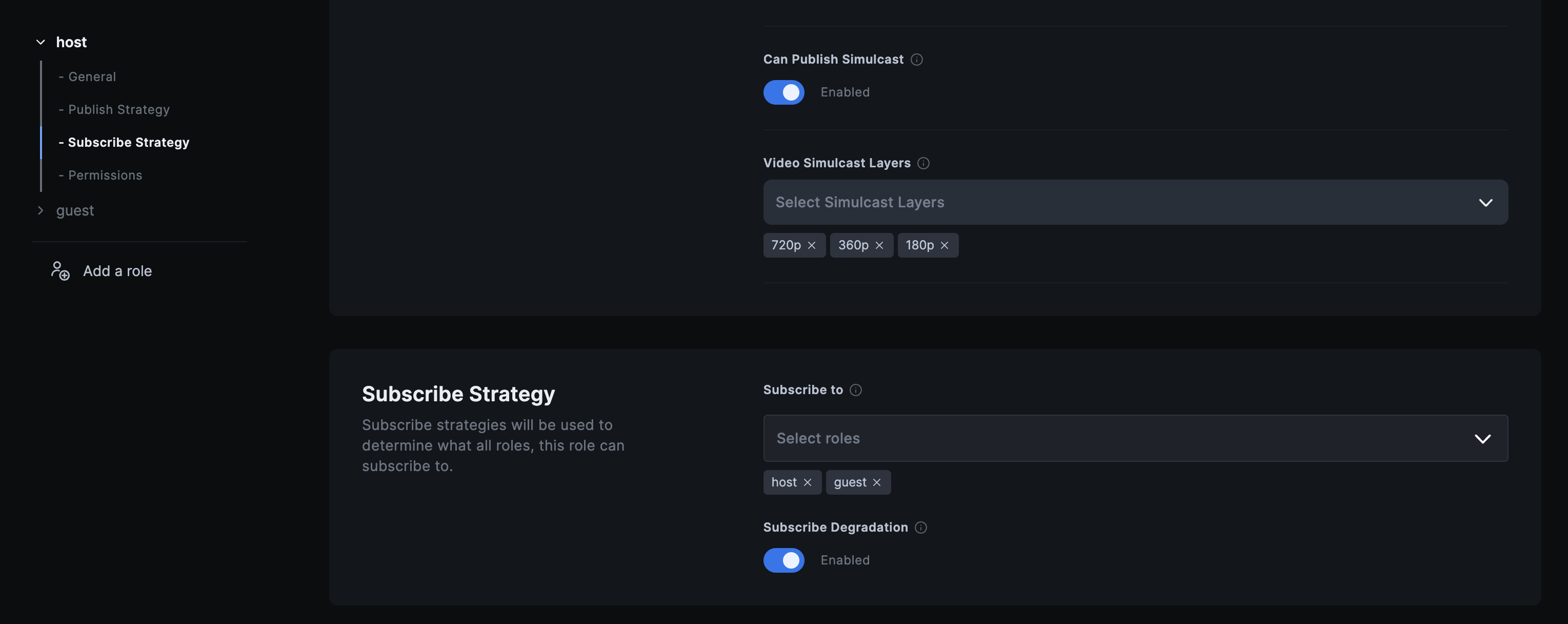

Developers have the following 100ms settings to play with (a few other WebRTC APIs expose similar ones):

- Highest publish layer settings - The ideal resolution, fps, and bitrate at which each user will publish their video. E.g. 720p, 30 fps, 850 kbps.

- Simulcast settings - The publishing user can publish up to 3 layers of video (e.g. lower layers at 540p/30fps/480kbps and 360p/30fps/320kbps), with subscribers moving to lower layers in poor network/device conditions.

- Subscribe degradation - This is a special case of fallback implemented by 100ms, where the subscribing user subscribes only to the audio when there’s not enough bitrate available for video, even at the lowest simulcast layer.
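To make the interaction between simulcast and subscribe degradation concrete, here is a minimal sketch of how a subscriber's layer could be chosen from an available-bitrate estimate. It is not 100ms' implementation: the `pick_layer` helper, the 32 kbps audio reservation, and the simple "highest layer that fits" rule are assumptions for illustration, and real SFUs also use bandwidth estimation and hysteresis. The layer values are the examples quoted in the list above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Layer:
    label: str
    bitrate_kbps: int


# Example layers taken from the settings described above.
LAYERS = [
    Layer("720p", 850),
    Layer("540p", 480),
    Layer("360p", 320),
]


def pick_layer(
    layers: List[Layer],
    available_kbps: float,
    audio_kbps: float = 32,  # assumed audio reservation, not a 100ms value
) -> Optional[Layer]:
    """Return the highest-bitrate layer that fits, or None for audio-only."""
    video_budget = available_kbps - audio_kbps
    fitting = [layer for layer in layers if layer.bitrate_kbps <= video_budget]
    if not fitting:
        # No video layer fits: subscribe degradation falls back to audio only.
        return None
    return max(fitting, key=lambda layer: layer.bitrate_kbps)
```

With these numbers, a subscriber with about 600 kbps available would land on the 540p layer, while one with 200 kbps would get `None`, which stands for the audio-only fallback.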

In this case, the customer is a dating app where users can have 1-on-1 audio or video calls. Most customers are in urban US/EU regions.

The developers chose the following settings for their app:

- Highest publish layer settings: 720p, 30 fps at 900 kbps

- Simulcast settings:

| Layer | Resolution | Frame Rate | Max Bitrate |
|---|---|---|---|
| Layer 1 (Highest publish layer setting) | 720p | 30 fps | 900 kbps |
| Layer 2 | 360p | 30 fps | 500 kbps |
| Layer 3 | 180p | 30 fps | 200 kbps |

- Subscribe Degradation: Enabled

These are standard settings, and what we often see developers go live with. After getting access to the beta build, 100ms’ customer success team worked with the developers to design test cases for their scenarios.

First dogfooding session results

While dogfooding the app, 100ms’ customer success team identified a problem where the video resolution dropped frequently and the video often became pixelated. This happened specifically on slightly older Android/iOS phones. These phones represented a double-digit percentage of the app’s users’ devices.

Debugging

To help isolate these issues, we validate the dogfooding test results with data - WebRTC stats. These stats keep track of video resolution, bitrate, frame rate, RTT (Round Trip Time), etc. that every user is publishing at any point in time.

Looking at the publish resolution data from publisher stats from the problematic sessions, it was clear that the user was only able to publish the 2nd simulcast layer of 360p but not the primary 720p layer. All other output results seemed fine.

One of two factors could be contributing to the lower resolution: internet connectivity or device CPU limitations. In this case, average_outgoing_bitrate, which is our best estimate of network health, did not point to a poor network. When we looked at publisher_quality_limitation, another of the publisher stats, it was clear that the device was unable to publish the 720p video because of CPU limitations. This confirmed the eye test: the drop was not caused by network conditions, but by these older phones being unable to consistently publish video at the highest publish layer.
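The reasoning in this debugging step can be sketched as a small classifier over publisher stats samples. The `PublisherSample` type and `diagnose` function are hypothetical, and the reason values ("none", "cpu", "bandwidth", "other") mirror the standard WebRTC `qualityLimitationReason` stat; that 100ms' publisher_quality_limitation uses the same values is an assumption.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class PublisherSample:
    height: int              # published frame height in pixels
    quality_limitation: str  # assumed values: "none", "cpu", "bandwidth", "other"


def diagnose(samples: Iterable[PublisherSample], expected_height: int) -> str:
    """Return "ok", or the most common limitation reason among degraded samples."""
    degraded = [s for s in samples if s.height < expected_height]
    if not degraded:
        return "ok"
    reasons = Counter(s.quality_limitation for s in degraded)
    reason, _count = reasons.most_common(1)[0]
    return reason


# Illustrative samples only, not data from the sessions described above.
SAMPLES = [
    PublisherSample(720, "none"),
    PublisherSample(360, "cpu"),
    PublisherSample(360, "cpu"),
    PublisherSample(360, "bandwidth"),
]
```

Calling `diagnose(SAMPLES, expected_height=720)` on these made-up samples points at "cpu", the same conclusion the stats led to in this case. Looking only at samples below the expected resolution keeps healthy periods from diluting the signal.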

Solving the Problem

It's surprising how many video conferencing tools (including Zoom and Google Meet) default to a slightly lower frame rate without users noticing the difference in 1-on-1 calls. 720p at 30 fps turns out to be too much for even slightly older devices. This causes devices to fall back to the next layer, 360p, which is visibly worse.

We wanted to reduce the CPU load on these low-end devices but still retain the quality. We ran the next dogfooding session by changing the publish layer settings to the following:

| Layer | Resolution | Frame rate | Max Bitrate |
|---|---|---|---|
| Layer 1 | 540p | 15 fps | 1100 kbps |
| Layer 2 | 480p | 30 fps | 1000 kbps |
| Layer 3 | 480p | 15 fps | 800 kbps |

We reduce CPU load in two ways here.

- Reducing the highest layer from 720p to 540p

- Keeping the last 2 layers at the same resolution. For both these layers, the publisher effectively needs to publish just once - at 480p/30fps. Switching from layer 2 to layer 3 can happen between the server (SFU) and the subscriber.
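A rough way to see why this helps is to compare how many pixels per second the device has to encode under each configuration. This is only a proxy: encoder CPU cost does not scale exactly with pixel throughput, the 16:9 aspect ratio is an assumption, and the sketch takes the point above at face value by counting layers 2 and 3 as a single 480p/30fps encode.

```python
from typing import List, Tuple


def pixels_per_second(height: int, fps: int, aspect: float = 16 / 9) -> int:
    """Pixels encoded per second for one stream, assuming a 16:9 frame."""
    width = round(height * aspect)
    return width * height * fps


def encode_load(encodes: List[Tuple[int, int]]) -> int:
    """Total pixels per second across all (height, fps) encodes."""
    return sum(pixels_per_second(height, fps) for height, fps in encodes)


# Original settings: three separate encodes.
ORIGINAL = [(720, 30), (360, 30), (180, 30)]

# Revised settings: 540p/15fps plus one shared 480p/30fps encode
# (layers 2 and 3 counted once, as described above).
REVISED = [(540, 15), (480, 30)]

ratio = encode_load(REVISED) / encode_load(ORIGINAL)

if __name__ == "__main__":
    print(f"revised / original pixel throughput: {ratio:.2f}")
```

Under these assumptions the revised settings push roughly 45% fewer pixels per second through the encoder, which is consistent with older phones being able to hold the top layer.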

Second dogfooding session results

Here’s a before and after comparison of the video quality after changing the settings.

With the same device and network conditions, these settings look much better in the older phones we saw the issue in. And for newer devices, this

- A higher frame rate (fps), such as 30 fps, does not always result in better video quality, especially on mobile devices. A lower fps rate, like 15 fps, can perform better without a noticeable visual difference.

- 540p at 1100 kbps yields excellent quality with sharp video, functioning well across various high-end and low-end devices.

- Reducing simulcast layers to two can further reduce the load on the device, improving video quality.

Conclusion

Understanding the end users’ device, location, and internet quality demographics is crucial to tailoring an optimal experience. There are no one-size-fits-all template settings that will solve for all use cases. We’ve thoroughly tested across a range of devices, a variety of internet conditions, and browsers, and have also set up periodic automated tests for the same purpose. This comprehensive testing gives us the advantage when we’re testing our customers’ setup. Our experience not only lessens your overall testing load but also allows you to arrive at the optimal settings faster.

Because issues often affect only a minority of users, it is easy to go live and not fix them immediately. Dogfooding apps on networks and devices close to the end-users' setup saves several weeks of poor user experience and ratings.
