What is Live Streaming? | All you need to know

September 10, 2022 | 8 min read

In 2020, you might’ve caught Dua Lipa’s STUDIO 2054 virtual concert - the one that drew 5 million viewers. Or were you one of the 8.4 million livestream viewers (and Fortnite fans) who tuned in to watch Epic Games' in-game event "The Device" in 2020?

Perhaps you bought yourself something from a live shopping event. These events generated almost US $5.6 billion in sales in 2020, so it’s not a stretch to imagine you or someone you know shopping at one.

Guess what’s common about the three wildly different events we just mentioned? They’re all livestreams.

Gone are the days when live streams were largely about individual influencers telling you what they had for lunch. Their value for businesses operating in different industries is now obvious and real-world approved.

But anecdotes aside, global consumption of live streams seems to be on the rise:

- 80% of audiences prefer watching a live video (livestreams) to reading a blog, based on a survey of 1000 adults spanning different ages and professions. Source.

- 63% of people aged 18-34 are watching live streamed content regularly. Source.

- 67% of audiences who watched a live stream purchased a ticket to a similar event the next time it occurred. Source.

- Live content gets 27% more view minutes per view compared to video on demand (just under 18 minutes). Source.

Organizations are finally starting to catch on to the efficacy of livestreams when it comes to generating brand and product awareness as well as revenue. Chances are, if you’re reading this article, your organization has realized the benefits of implementing live streaming in your customer-facing apps & websites.

However, if you are still asking yourself “What is Live streaming?”, this article is a good place to start. It’s your 101 guide to what live streaming is, how it works, and which technical elements it requires.

What is Live streaming?

Chances are, you’ve already watched at least one live stream by now (or, more realistically, tens or hundreds). Essentially, live streams involve sending video and audio over the internet in near real-time.

Think of live streams as watching live news or sports. Now extend that thought to include concerts, video games, fashion shows, and travel experiences broadcast in real time.

In live streams, video is captured, processed, and delivered to viewers almost simultaneously.

All viewers need to watch a live stream is an internet-enabled device and access to the streaming platform. But what actually happens between when the moment is “captured” and the moment when it is actually consumed by you?

How does Live streaming work?

Note: The workflow described below is largely how livestreaming works when implemented via HLS - the most popular (almost default) protocol for video-delivery over the internet. However, there are other livestreaming protocols that may require a different set of steps to push livestreams to viewers.

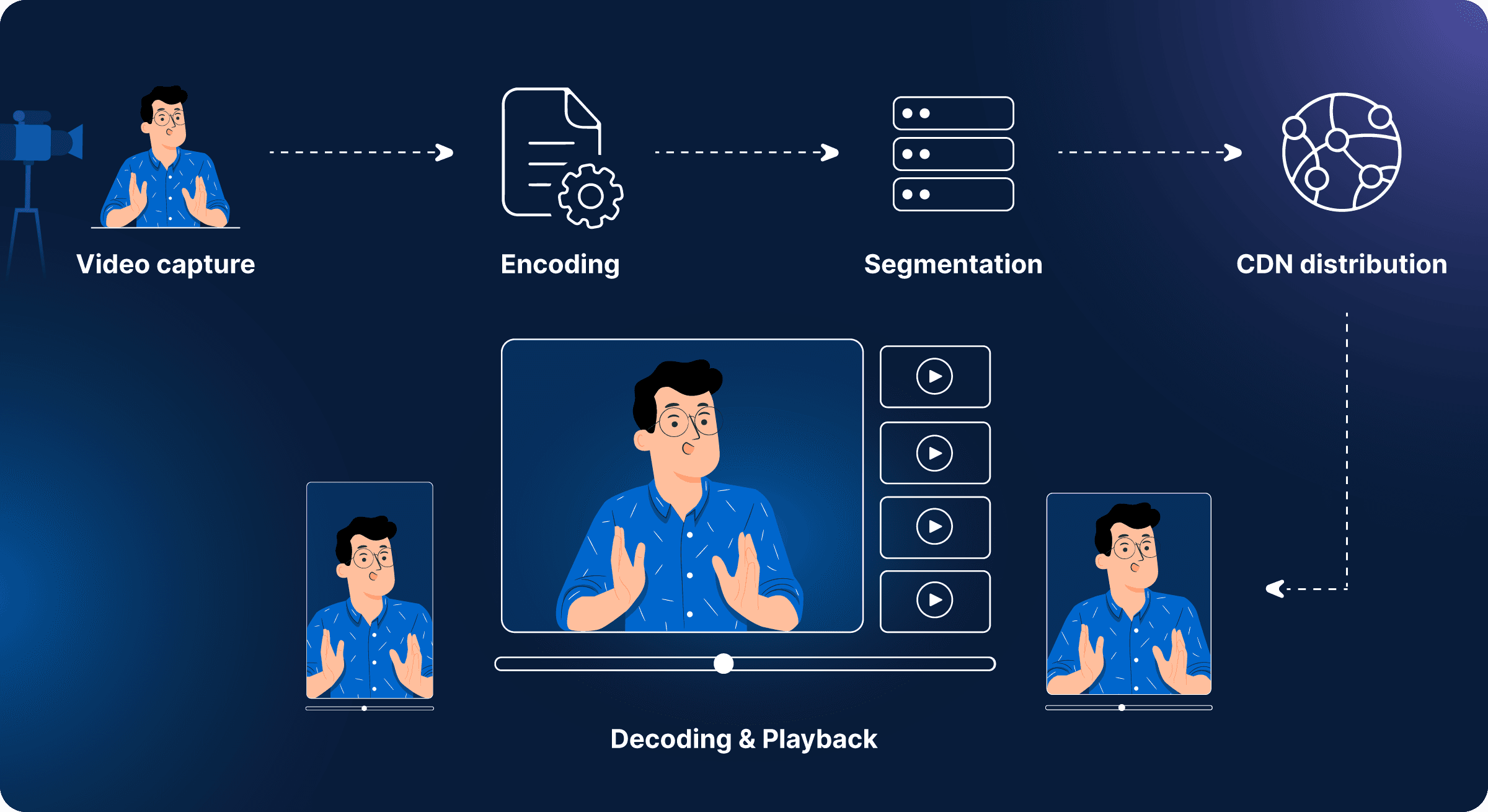

In order for video to be streamed live, it goes through the following stages:

- Video capture

- Encoding

- Segmentation

- Content delivery network (CDN) distribution

- Decoding & Playback

Video Capture

First, video is captured by a digital camera. Such cameras often capture video at resolutions of up to 4K, which produces too much data to be transported to viewer devices in its raw form.

Note that all cameras perform some compression automatically on captured images, in order to store them efficiently. However, even after compressing them, the files are too large to be transferred over most internet connections. This is especially true for livestreams catering to large audiences logging in from different locations and different network connectivity levels.
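To get a feel for the scale involved, here is a rough back-of-the-envelope calculation. The figures are assumptions chosen for illustration (4K UHD at 3840x2160, 3 bytes per pixel, 30 frames per second); the article itself does not specify them, and real cameras vary.

```python
def raw_bitrate_bps(width: int, height: int, bytes_per_pixel: int, fps: int) -> int:
    """Bits per second needed to send video with no compression at all."""
    return width * height * bytes_per_pixel * fps * 8


# Assumed figures for illustration only: 4K UHD, 3 bytes per pixel, 30 fps.
uhd_bps = raw_bitrate_bps(3840, 2160, 3, 30)
print(f"{uhd_bps / 1e9:.2f} Gbit/s")  # prints "5.97 Gbit/s"
```

Under these assumptions, uncompressed video would need roughly 6 Gbit/s, far more than a typical home or mobile connection can carry.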

Encoding

The raw video captured by the camera cannot be directly transmitted to viewers. Video must be compressed - converted from heavy, raw files to smaller, compressed files.

Think of your video as a collection of still images, shown one after the other, at a certain pace that is fast enough to create the illusion of movement to the human eye. Encoding packs this sequence of still images efficiently, by eliminating repetition and utilizing the commonalities between subsequent frames.

For example, let’s say that the first frame of the video depicts a dancer twirling against a blue wall. If the background does not change for the next few frames, the background does not have to be separately rendered for those subsequent frames. Encoding can potentially remove the data for the background for all frames except the first one - thus reducing the data required to depict that background.
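The dancer-and-wall idea can be sketched as a toy example. This is not how any real codec is implemented (real codecs use far more sophisticated techniques); it only illustrates storing one full frame and then just the pixels that changed. The `Frame` type and the `encode` and `decode` functions are hypothetical names made up for this sketch.

```python
from typing import Dict, List, Tuple

Frame = List[int]  # a frame flattened into a list of pixel values


def encode(frames: List[Frame]) -> Tuple[Frame, List[Dict[int, int]]]:
    """Keep the first frame in full, then only the pixels that changed."""
    keyframe = list(frames[0])
    deltas: List[Dict[int, int]] = []
    previous = keyframe
    for frame in frames[1:]:
        # Record position -> new value for every pixel that differs.
        deltas.append(
            {i: new for i, (new, old) in enumerate(zip(frame, previous)) if new != old}
        )
        previous = frame
    return keyframe, deltas


def decode(keyframe: Frame, deltas: List[Dict[int, int]]) -> List[Frame]:
    """Rebuild every frame by replaying the changes on top of the first one."""
    frames = [list(keyframe)]
    for delta in deltas:
        frame = list(frames[-1])
        for i, value in delta.items():
            frame[i] = value
        frames.append(frame)
    return frames


# 7 = blue wall, 1 = dancer moving one pixel to the right in each frame.
frames = [
    [7, 7, 1, 7, 7, 7],
    [7, 7, 7, 1, 7, 7],
    [7, 7, 7, 7, 1, 7],
]
keyframe, deltas = encode(frames)
print(deltas)  # [{2: 7, 3: 1}, {3: 7, 4: 1}]
```

Only two pixel changes are stored for each later frame instead of all six pixels, and `decode(keyframe, deltas)` reproduces the original frames.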

Encoders use ‘codecs’ (short for coder-decoder) to compress video files by removing redundant information within and between frames. This converts massive raw files into reasonably-sized units that are easier to stream over most internet connections.

Note: Codecs are the standards or processes that define how video is compressed for storage and transmission, and how it is decompressed for playback. The example above of the dancer and the blue wall depicts what codecs do - reduce file size by eliminating redundant data while retaining quality as far as possible. Different codecs accomplish this in different ways.

It is possible to convert video files from one codec to another during the livestreaming process. However, that is beyond the scope of this article, and will be explored in detail in an upcoming piece.

Segmentation

After encoding, video files are directed to a media server. Even though the video files are now more streamable, they still need to be further processed for streaming to users with different devices and internet speeds. For this purpose, the server segments the video and converts these segments into multiple renditions or bitrates.

To start with, the video files are broken down into chunks or segments. The segment length can be decided beforehand to suit your use-case. For example, you can split a one-hour long video into 360 10-second segments.

Next, each of these segments is converted to different renditions or bitrates. For example, in this case, the 360 segments are converted into:

- 360 segments at 1080p

- 360 segments at 720p

- 360 segments at 360p
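The arithmetic above, and the way a set of renditions is typically advertised to an HLS player, can be sketched as follows. The bitrates, the `LADDER` name, and the `1080p/index.m3u8` style paths are assumptions made up for this example; only the three resolutions and the 10-second segment length come from the text above.

```python
import math


def segment_count(duration_s: int, segment_s: int) -> int:
    """Number of segments needed to cover the whole duration."""
    return math.ceil(duration_s / segment_s)


# (name, width, height, assumed bitrate in bits per second)
LADDER = [
    ("1080p", 1920, 1080, 5_000_000),
    ("720p", 1280, 720, 2_800_000),
    ("360p", 640, 360, 800_000),
]


def master_playlist(ladder) -> str:
    """Build a minimal HLS master playlist listing one entry per rendition."""
    lines = ["#EXTM3U"]
    for name, width, height, bandwidth in ladder:
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={bandwidth},RESOLUTION={width}x{height}"
        )
        lines.append(f"{name}/index.m3u8")  # hypothetical path to that rendition
    return "\n".join(lines) + "\n"


count = segment_count(3600, 10)
print(count)                # 360 segments per rendition
print(count * len(LADDER))  # 1080 segment files in total
print(master_playlist(LADDER))
```

The player reads a playlist like this first, which is how it learns which renditions exist before it requests any video segments.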

Segmentation is required so that users can watch livestreams without interruptions, no matter the strength of their network connection. Modern video players (most of which are HTML5 players) can pick and choose which video segments to play, depending on the bandwidth available. They can also automatically switch between video segments at different bitrates mid-stream if the connection improves or degrades.

Segmentation is required to achieve something called adaptive bitrate streaming (ABR) - a technique at the heart of modern, democratized livestreams. Keep scrolling, and you’ll find ABR explained in detail later in this article.

CDN distribution and caching

Any livestream catering to even hundreds of users requires the video server to store, queue, and process formidable volumes of data. Moreover, with users joining from multiple devices, a single video server responding to requests is bound to experience load-based stress and even crash. To prevent this from happening, livestreaming stacks use Content Delivery Networks (CDNs).

A CDN is a network of interconnected servers placed across the world. The main criterion for distributing content (video chunks in this context) via CDNs is how physically close the server is to the end-user. Here’s how it works:

Once your viewer clicks the play button, their video player triggers a request for the livestream content. The request is routed to the closest CDN server. If this is the first time this content is being requested, the CDN server will forward the request to the origin server, where the video segments are processed and stored. In response, the origin server sends the video to the CDN server.

The CDN server will forward the video segments to the user. But it will also cache a copy of them locally. When requests for the same content come through again, the CDN does not have to trigger another request to the origin server. It can just forward the cached files.
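The request flow described above can be sketched as a tiny in-memory cache. This is a simplified illustration, not a real CDN: the `EdgeServer` class, the `origin` function, and the segment path are hypothetical, and a production CDN would also expire and evict cached entries.

```python
from typing import Callable, Dict


class EdgeServer:
    """A CDN server that asks the origin only on a cache miss."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes]) -> None:
        self._fetch = fetch_from_origin
        self._cache: Dict[str, bytes] = {}
        self.origin_requests = 0

    def get(self, path: str) -> bytes:
        if path not in self._cache:
            # First request for this segment: go to the origin and keep a copy.
            self.origin_requests += 1
            self._cache[path] = self._fetch(path)
        # Later requests are answered from the local copy.
        return self._cache[path]


def origin(path: str) -> bytes:
    """Stand-in for the origin server that stores the processed segments."""
    return f"segment data for {path}".encode()


edge = EdgeServer(origin)
first = edge.get("720p/segment_001.ts")
second = edge.get("720p/segment_001.ts")
print(edge.origin_requests)  # 1
```

Two viewers asked for the same segment, but the origin server was contacted only once.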

The whole point of CDNs is that they are scattered across the world. This helps reduce latency in livestreams (or any video content) because viewer requests do not have to travel inordinately long distances every time someone wants to watch online content.

Decoding and playback

All video data reaches the end-user’s player in encoded format, as discussed above. The player decodes and decompresses the video segments in order to play them. This player could be a dedicated app or function within a browser.

In order to decode the encoded and compressed files, the video player must support the codec that was used to encode them.

Adaptive Bitrate Streaming (ABR)

Adaptive bitrate streaming is a technology that ensures that viewers of online video content get the best possible video quality, as allowed by their bandwidth availability. It is designed to provide viewers with uninterrupted video streams, irrespective of changes in a user’s internet speed.

As explained above, during segmentation, video chunks are processed into different bitrates. Depending on the end-users’ internet strength, the video player chooses the rendition that would be best assured to provide swift buffering and seamless playback.

For example, let’s say you’re watching a livestream via your home wi-fi connection. Chances are, your connection is high-speed, which means that you’ll probably get video segments at 720p or 1080p. However, if you decide to watch the stream at a cafe while waiting for your coffee order, you might hit a spot of bad connection, which is when your player will select video segments at 360p or lower. That way, the video never pauses and you never miss out on the content; you just might have to watch it at a lower quality.
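A minimal sketch of this selection logic is shown below. It is an illustration under stated assumptions rather than the algorithm any particular player uses: the bitrates, the 0.8 safety margin, and the `pick_rendition` name are all made up for the example.

```python
# (name, assumed bitrate in bits per second), ordered from highest to lowest
RENDITIONS = [("1080p", 5_000_000), ("720p", 2_800_000), ("360p", 800_000)]


def pick_rendition(measured_bps: float, renditions=RENDITIONS, safety: float = 0.8) -> str:
    """Pick the best rendition that fits within a fraction of measured bandwidth."""
    budget = measured_bps * safety  # leave headroom for bandwidth fluctuations
    for name, bitrate in renditions:
        if bitrate <= budget:
            return name
    # Nothing fits: fall back to the lowest rendition instead of stopping playback.
    return renditions[-1][0]


print(pick_rendition(10_000_000))  # 1080p (fast home wi-fi)
print(pick_rendition(4_000_000))   # 720p
print(pick_rendition(1_500_000))   # 360p (patchy cafe connection)
print(pick_rendition(500_000))     # 360p (lowest available)
```

A player would typically repeat a check like this as it downloads segments, so the chosen rendition can change mid-stream as conditions change.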

Interactivity for Greater Audience Engagement

Livestreams allow for real-time interaction with audiences. Most live streams at least offer a chat function, through which users can leave comments and ask questions.

However, livestreams are now moving beyond basic text-based interactivity. Audiences now expect to be seen and heard interacting with the streamers. For example, it is common for celebrities on Instagram to host live videos in which they select members from the audience to talk to, while the rest of the viewers watch.

Also, since livestreams can now be recorded, they can be reuploaded as on-demand content for viewers who may not have caught the stream in real-time. Essentially, live streams are now able to target multiple viewer segments.

Bear in mind that users also do not simply want to sit and be spoken to. They want to engage, highlight what matters to them, ask questions, have their opinions heard. Livestreams must support this expectation via features like polls, chat, video interaction & more - all of which are supported with 100ms.

The use-cases for interactive livestreams are also expanding into industries you wouldn’t imagine would align with this technology. For example, live shopping allows sellers to showcase their products online, and lets customers buy said products in real-time. This phenomenon has exploded in China, to the extent that it is being hailed as the future of shopping.

Build & Run Interactive Livestreams with a single SDK

If you’re planning on adding livestreams to your video content strategy, it's recommended that you build with interactivity in mind. 100ms enables exactly this by offering a single SDK which combines streaming with conferencing. Our Interactive Live Streaming SDK enables customers to build more engaging live streams with multiple broadcasters, interactivity between broadcaster and viewer, and the ability to stream from mobile easily.

At a high level, the 100ms SDK lets you build two-way interactive live streams into your product with minimal effort. Broadcasters can go live from any browser, and invite audience members to the stage in order to communicate with them directly, on video. We’ve also built primitives that allow for building varied interactions (chat, polls, call metadata) on top of our SDK.

Additionally, you get to customize your screen layout, and launch streams directly from apps or desktop browsers without having to use professional stream encoding software of any kind.

If you’re not convinced, just click on the link above and try out the SDK for free.
