
A New Approach to Live Streaming

July 27, 2022 · 4 min read

The long arc of tech progress has shown that user behavior and technology evolve together: changes in user behavior inspire technology, and then technology drives more of that behavior. The world of live streaming is going through one such change, where new user behavior is driving us to reevaluate the live streaming tech stack.

Since the first live stream in 1995, a baseball game between the Yankees and the Mariners, live streaming has become an important medium for people on the Internet to learn, play, shop, and work. Who gets to stream and how they interact with their audience is changing rapidly, and this change is informing our approach to building infrastructure for live streaming.

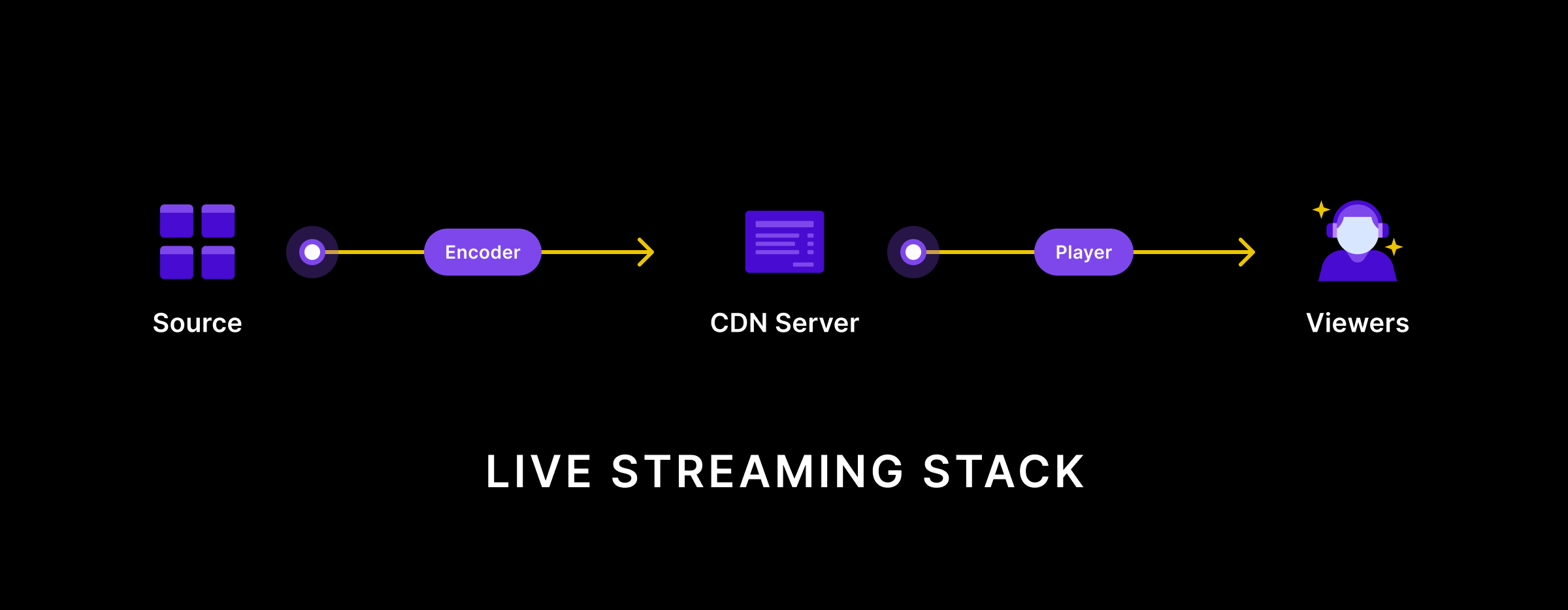

The present-day live streaming tech stack

Most live streaming apps today combine RTMP-encoded media streams on the streamer’s end with HLS streams on the viewer’s end. In between sits an industry of media servers that transcode the incoming RTMP stream into HLS output.

RTMP is a mature protocol that was originally built for Adobe Flash. Given its maturity, RTMP is widely supported by encoding software and hardware, which can ingest raw device streams and output RTMP streams. RTMP is also fast: it is designed for low latency.

RTMP used to work well on the viewer’s end too, since it was the preferred streaming protocol for Adobe Flash. However, as Flash usage declined and HTML5 emerged, HLS became a better fit for viewers. HLS is built over HTTP and is widely supported across mobile and desktop devices.
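HLS’s reliance on HTTP shows up directly in its playlist format. As a minimal sketch, assuming an upstream media server has already transcoded the RTMP input into short segments, the function below renders a live media playlist using tags defined in RFC 8216. The playlist is an ordinary text file that players re-fetch over HTTP, and each segment it lists is an ordinary HTTP download, which is why standard web servers and CDNs can deliver it. `Segment`, `live_media_playlist`, and the segment names are hypothetical illustrations, not the API of any particular media server.

```python
import math
from dataclasses import dataclass


@dataclass
class Segment:
    uri: str         # hypothetical segment name, fetched by players over plain HTTP
    duration: float  # segment length in seconds


def live_media_playlist(segments: list[Segment], first_sequence: int) -> str:
    """Render a sliding-window live HLS media playlist using RFC 8216 tags.

    A live playlist has no #EXT-X-ENDLIST tag, so players keep re-fetching
    it over HTTP to discover newly published segments.
    """
    # Each EXTINF duration, rounded to the nearest integer, must not exceed
    # EXT-X-TARGETDURATION; taking the ceiling always satisfies that rule.
    target = max(math.ceil(s.duration) for s in segments)
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",  # version 3 permits decimal EXTINF durations
        f"#EXT-X-TARGETDURATION:{target}",
        f"#EXT-X-MEDIA-SEQUENCE:{first_sequence}",  # sequence number of the first listed segment
    ]
    for seg in segments:
        lines.append(f"#EXTINF:{seg.duration:.3f},")
        lines.append(seg.uri)
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # A three-segment window that starts at media sequence 120.
    window = [Segment(f"segment{i}.ts", 6.0) for i in range(120, 123)]
    print(live_media_playlist(window, first_sequence=120), end="")
```

As new segments are published, the server would drop the oldest entry, append the newest, and increment the media sequence number, so viewers always see a short moving window of the live stream.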

The combination of RTMP and HLS has worked well because of the asymmetry between live streaming personas: there are many viewers, who need frictionless playback, and only a few streamers, who need to configure specialized encoding software (like OBS).

So what’s changing?

User behavior around live streaming is changing in three big ways: democratization, interactivity, and creator collaboration.

Democratization

Live streaming has been democratized and is no longer limited to professional streamers using sophisticated equipment connected to reliable broadband. Anyone can now go live on Instagram or YouTube, often from a mobile device on an unreliable network.

Interactivity

Live streams are no longer one-way broadcasts. Streamers and viewers are looking for ways to engage and interact with each other. Chat and emoji reactions running alongside live streams are now table stakes.

More recently, we have come across scenarios where viewers get “promoted” into becoming streamers. This enables new stream formats and increases the engagement between streamers and viewers.

Creator collaboration

Streamers are also experimenting with newer formats that involve collaborating with other streamers. As Ping Labs puts it, video calls have now become video content.

The pandemic has accelerated these changes. Both stream creation and viewership rose sharply, which led to more streams, more interactivity, and more experimentation with stream formats.

What will happen to the live streaming tech?

Live streaming works well on the viewer’s end. HLS, and similar protocols like MPEG-DASH, have democratized viewership by building on top of HTTP. Anyone with a web browser or a smartphone can view live streams today.

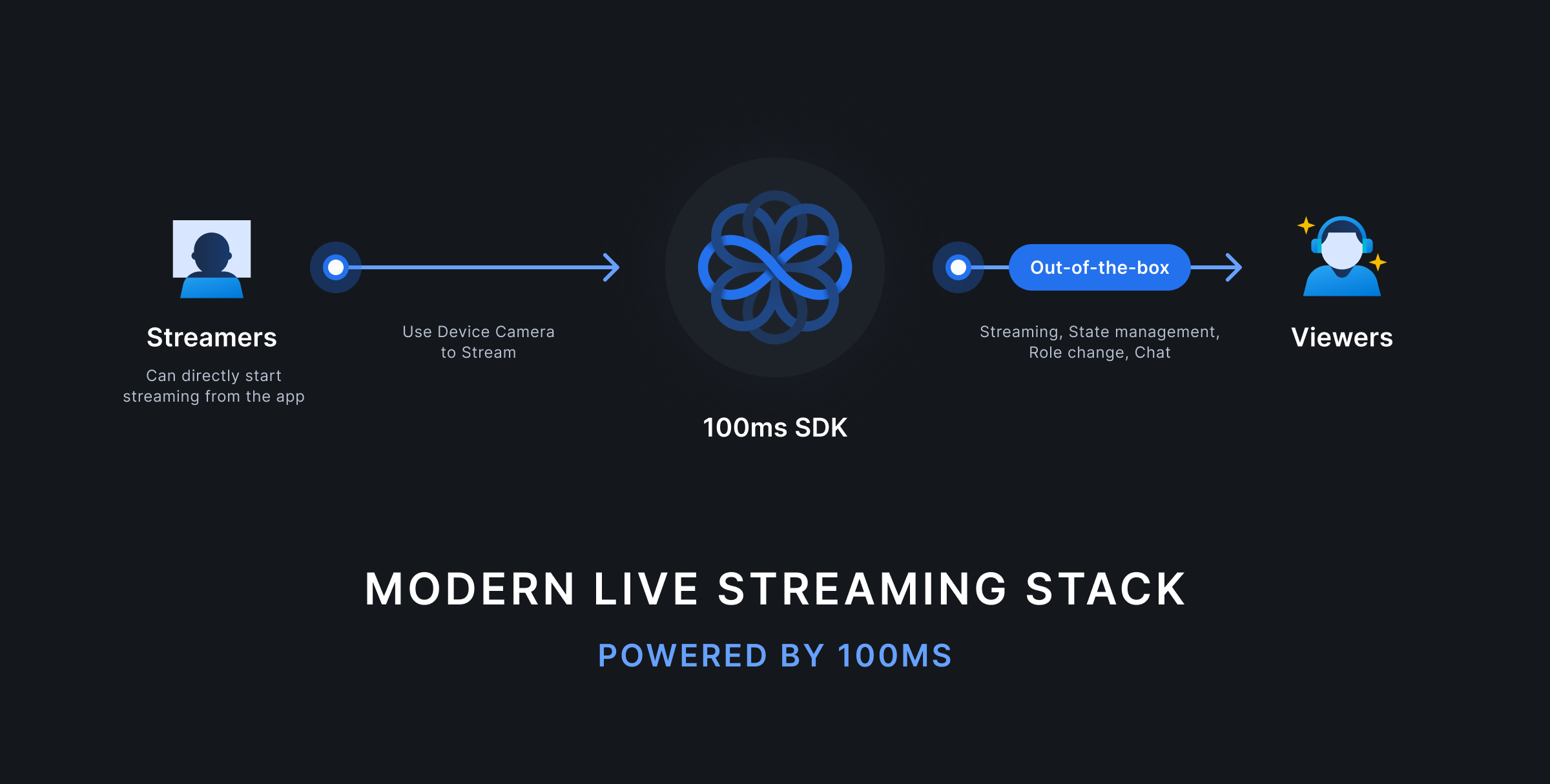

It’s time for something similar to happen on the streamer’s end. The answer to democratization, interactivity, and creator collaboration lies in WebRTC becoming an alternative to RTMP in the live streaming tech stack.

Given when it was designed, RTMP is poorly suited to streaming from mobile devices. It is built over TCP and assumes a fixed encoding bitrate. When the device runs into network disruptions, an RTMP encoder keeps producing output at that bitrate, which further congests the network. WebRTC is a more modern protocol built over UDP, and it can adjust the encoding bitrate based on network feedback.
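To make the contrast concrete, here is a toy sender-side controller that reacts to packet-loss reports, set against an encoder pinned at a fixed rate. It is a minimal sketch loosely modeled on the loss-based rule in the Google Congestion Control (GCC) Internet-Draft: back off when loss exceeds 10%, probe up by 5% when loss is under 2%, and hold otherwise. Production WebRTC stacks also run delay-based estimation, so treat the thresholds, the bitrate bounds, `FIXED_KBPS`, and the `LossBasedRateController` class as illustrative assumptions rather than how any particular browser behaves.

```python
from dataclasses import dataclass

FIXED_KBPS = 2000.0  # hypothetical rate a fixed-bitrate encoder sends regardless of feedback


@dataclass
class LossBasedRateController:
    """Toy sender-side bitrate controller driven by receiver loss reports.

    Loosely modeled on the loss-based rule in the GCC Internet-Draft;
    real WebRTC stacks also run a delay-based estimator alongside it.
    """

    bitrate_kbps: float
    min_kbps: float = 150.0   # hypothetical floor
    max_kbps: float = 2500.0  # hypothetical ceiling

    def on_feedback(self, loss_fraction: float) -> float:
        """Update the target bitrate from one loss report (0.0 to 1.0)."""
        if loss_fraction > 0.10:
            # Heavy loss: back off in proportion to how much was lost.
            self.bitrate_kbps *= 1 - 0.5 * loss_fraction
        elif loss_fraction < 0.02:
            # Nearly clean link: probe upward by 5%.
            self.bitrate_kbps *= 1.05
        # Between 2% and 10% loss the rate is held steady.
        self.bitrate_kbps = min(max(self.bitrate_kbps, self.min_kbps), self.max_kbps)
        return self.bitrate_kbps


if __name__ == "__main__":
    # Simulated loss reports: healthy, a disruption, partial and full recovery.
    reports = [0.0, 0.0, 0.30, 0.30, 0.05, 0.0]
    ctrl = LossBasedRateController(bitrate_kbps=FIXED_KBPS)
    for loss in reports:
        adaptive = ctrl.on_feedback(loss)
        print(f"loss={loss:.0%}  fixed={FIXED_KBPS:.0f} kbps  adaptive={adaptive:.0f} kbps")
```

During the simulated disruption the adaptive sender cuts its rate to relieve the link, while the fixed-rate sender keeps pushing the same bitrate into a congested network. Once loss clears, the controller probes back up gradually rather than jumping straight to its previous rate.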

WebRTC is also more widely available. Any modern web browser today can encode WebRTC streams without requiring any additional software. Native apps on iOS and Android also support WebRTC well.

WebRTC is also built for interactivity, since it was originally designed for real-time video conferencing. Chat and other forms of interactivity are easy to build on top of WebRTC. It is also possible to invite HLS viewers to join as WebRTC participants, which enables advanced interactivity scenarios such as promoting a viewer into a streamer.

Given its roots in conferencing, WebRTC also supports creator collaboration out of the box. Streamers can join from different device platforms, since WebRTC is available almost everywhere.

The worlds of live video will merge

Given this evolution in user behavior, it is time for the worlds of streaming and conferencing to merge. Streaming use cases will leverage conferencing tech to introduce interactivity and other benefits. Conferencing will leverage streaming tech to scale video calls to many viewers in near real time.

Get access

We are a team that has built live video products at companies like Disney+ and Facebook, and we are now applying that expertise to enable thousands of developers to build live video apps.

We are excited by the creativity of our customers who are imagining new use cases of live video every day. Developers and product managers are building experiences that mix the worlds of conferencing and streaming, and we are building infrastructure to enable them to do more with less.

If this evolution in live video excites you and is relevant to your needs, try live streaming with 100ms. Sign up to get started and join our Discord community to connect with us. We look forward to seeing what you build.
