RTMP vs WebRTC vs HLS: Live Video Streaming Protocols Compared

March 11, 2022 · 6 min read

Live video streaming has made the jump from a novel, ground-breaking, and elite technology to a ubiquitous mode of communication and content consumption in everyday life, whether in social media, entertainment, sports, or education. The list goes on.

In the post-pandemic era, the demand for video has amplified as home-bound individuals produce and consume hundreds of hours of video for work meetings, family reunions, hanging out with friends, online education, and everything in between. Zoom’s average number of users jumped from 10 million in December 2019 to 300 million in April 2020. Cisco’s Webex doubled its users to 324 million between January and March 2020.

In this gold rush for live video, the integration of video streaming in all types of applications has become essential. Given the explosion in demand, developers, managers, and organizational stakeholders are investing more time, effort, and money in understanding video than ever before.

As always, the best place to start is the basics. This piece will explore the fundamentals of how video travels through the internet highway via video streaming protocols. It will also offer insight into deciding which streaming protocol might be the right fit for the next video application you want to build.

But first, let’s answer the most obvious question.

What are video streaming protocols?

Video streaming protocols spell out the rules for sending multimedia from one device to another across the Internet: how the stream is segmented into chunks, how those chunks are transmitted, and how they are reassembled for playback in the right sequence.

Each protocol has its own pros and cons and it’s important to consider which would best fit into your business requirements and meet customer expectations.

To start with, let’s have a look at the most frequently used video protocols, the three titans of the video streaming “empire”, so to speak: RTMP, WebRTC, and HLS.

RTMP (Real-Time Messaging Protocol): The Dusk

RTMP or Real-Time Messaging Protocol was developed by Adobe to enable high-performance live streaming of audio, video, and data between a dedicated RTMP streaming media server and Adobe Flash Player.

The primary intent of this protocol was to achieve low latency and reliable communication by maintaining a persistent connection between the streaming server and the video player. In layman’s terms, it can deliver a reliable video stream from the source to the viewer with under 5 seconds of delay on average.

With these features, it's no wonder that RTMP was the de facto protocol for live video until the early 2010s. Its marriage to Flash Player gave it a huge reach and compatibility with most browsers of the time.

Cons of RTMP

As more modern clients like mobile and IoT devices emerged in the last decade, RTMP started losing ground because it could not support native playback on these platforms. Flash Player was also taking a hit from the new kid on the block: HTML5. With the slow decline of Flash support across all clients, the sun has almost set on RTMP delivery channels.

Yet RTMP continues to live on as a popular channel for first-mile delivery, known as RTMP ingest, which transports media from the encoder to the media streaming servers. Top media streaming platforms such as YouTube, Twitch, and Facebook use RTMP ingest to live stream video from publishers.
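To make RTMP ingest a little more concrete, here is a minimal sketch of how an encoder push might be assembled with FFmpeg. It only builds the argument list and does not run anything. The ingest URL, stream key, and input file are hypothetical placeholders, and the codec choices (H.264 via `libx264`, AAC) are assumptions about a typical setup, not requirements of any particular platform.

```python
from __future__ import annotations


def build_rtmp_ingest_command(source: str, ingest_url: str, stream_key: str) -> list[str]:
    """Return an ffmpeg argument list that pushes `source` to an RTMP ingest endpoint."""
    return [
        "ffmpeg",
        "-re",              # read the input at its native rate, like a live source
        "-i", source,
        "-c:v", "libx264",  # assumed video codec: H.264
        "-c:a", "aac",      # assumed audio codec: AAC
        "-f", "flv",        # RTMP carries media in the FLV container format
        f"{ingest_url.rstrip('/')}/{stream_key}",
    ]


# Hypothetical endpoint and key, for illustration only.
cmd = build_rtmp_ingest_command("input.mp4", "rtmp://ingest.example.com/live", "STREAM_KEY")
print(" ".join(cmd))
```

In practice the platform you publish to supplies the ingest URL and stream key, and the list can be handed to `subprocess.run` on a machine where FFmpeg is installed.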

HLS (HTTP Live Streaming): The Day

HLS or HTTP Live Streaming Protocol was launched by Apple in 2009 as an open specification for delivering media over HTTP which is then playable with HTML5 players. Following the rise of HTML5, HLS took over the reins of video streaming by offering robust, reliable media distribution that can scale to hundreds of millions of users with the support of CDNs.

Today, HLS is widely supported across browsers, mobile, and living-room (LR) platforms to facilitate video consumption and streaming. A key advantage of HLS is its use of Adaptive Bitrate Streaming (ABR), which greatly enhances viewer experience and stream quality.

ABR makes multiple renditions of the same video available to each client. Depending on network conditions, the client can switch between the available streams to get smooth playback with better quality and minimal buffering. In other words, the player selects video quality based on the strength of its network.
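As a rough illustration of that selection step, the sketch below parses a small HLS master playlist and picks the highest rendition that fits a measured throughput. The playlist contents, the `parse_variants` and `pick_variant` helpers, and the 0.8 safety factor are all assumptions made for this example. Real players use more elaborate heuristics, such as buffer level and throughput history.

```python
from __future__ import annotations

import re

# A made-up master playlist with three renditions.
MASTER_PLAYLIST = """#EXTM3U
#EXT-X-STREAM-INF:BANDWIDTH=800000,RESOLUTION=640x360
360p/index.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=2500000,RESOLUTION=1280x720
720p/index.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=5000000,RESOLUTION=1920x1080
1080p/index.m3u8
"""


def parse_variants(playlist: str) -> list[tuple[int, str]]:
    """Return (bandwidth_bps, uri) pairs from a master playlist, lowest bitrate first."""
    lines = [line.strip() for line in playlist.splitlines() if line.strip()]
    variants = []
    # Each EXT-X-STREAM-INF tag is followed by the URI of its rendition.
    for tag, uri in zip(lines, lines[1:]):
        if tag.startswith("#EXT-X-STREAM-INF:") and not uri.startswith("#"):
            match = re.search(r"[:,]BANDWIDTH=(\d+)", tag)
            if match:
                variants.append((int(match.group(1)), uri))
    return sorted(variants)


def pick_variant(variants: list[tuple[int, str]], measured_bps: float,
                 safety: float = 0.8) -> tuple[int, str]:
    """Pick the highest rendition within safety * measured_bps, else the lowest one."""
    budget = measured_bps * safety
    fitting = [v for v in variants if v[0] <= budget]
    return fitting[-1] if fitting else variants[0]


variants = parse_variants(MASTER_PLAYLIST)
print(pick_variant(variants, 4_000_000))  # (2500000, '720p/index.m3u8')
print(pick_variant(variants, 500_000))    # (800000, '360p/index.m3u8')
```

Leaving headroom below the measured throughput is a common way to avoid switching to a rendition the connection can only barely sustain.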

HLS also supports DRM encryption (Digital Rights Management), a high security mechanism to protect the content it is streaming. This makes it the ideal choice for last-mile content delivery by all streaming and OTT providers.

Cons of HLS

For live video streaming, the main drawback of HLS is latency. As opposed to RTMP, the video is not transported as one continuous stream from the server to the client. It is broken up into smaller segments of a certain duration and sent over HTTP. Clients can start playback only after receiving a full segment.

Taking into account the time for ingesting the source, generating multiple renditions, distribution over CDNs, and final playback buffer requirements, latency can easily peak at 20-30 seconds in HLS. This is an absolute no-go for many applications involving peer-to-peer interactions, conferencing, etc.
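A back-of-the-envelope model shows where those seconds come from. The function below simply adds up the stages named above. Every number in the example calls is an illustrative assumption rather than a measurement, and `estimate_hls_latency` is a hypothetical helper written for this post.

```python
def estimate_hls_latency(segment_s: float, buffered_segments: int,
                         ingest_s: float, transcode_s: float, cdn_s: float) -> float:
    """Rough glass-to-glass latency: pipeline delays plus the player's segment buffer."""
    player_buffer_s = segment_s * buffered_segments
    return ingest_s + transcode_s + cdn_s + player_buffer_s


# Assumed setup: 6-second segments, with the player buffering 3 of them.
print(estimate_hls_latency(segment_s=6.0, buffered_segments=3,
                           ingest_s=1.0, transcode_s=2.0, cdn_s=2.0))  # 23.0

# Same pipeline with 2-second segments.
print(estimate_hls_latency(segment_s=2.0, buffered_segments=3,
                           ingest_s=1.0, transcode_s=2.0, cdn_s=2.0))  # 11.0
```

Under these assumptions the player buffer dominates the total, which is why segment duration is usually the first thing to tune when trying to reduce HLS latency.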

Apple introduced a low-latency extension to HLS (LL-HLS) in 2019, but it is still in the early stages of adoption.

WebRTC (Web Real-Time Communication): The Dawn

WebRTC or Web Real-Time Communication is a free and open-source technology for enabling real-time video, audio, and data communication without any plugins. Simply put, it allows real-time video and audio communication to work inside web pages.

In 2010, Google acquired a company that developed many components required for RTC, open-sourced the technology, and engaged with relevant standards bodies at the IETF and W3C to ensure industry consensus. After a year, Google released an open-source project for browser-based real-time communication - called WebRTC.

Over the next few years, WebRTC gained the support of major players like Apple, Mozilla, Microsoft, Google, Opera, and more. Many modern browsers now support WebRTC natively. Adoption has also been steadily expanding to mobile platforms and even the IoT space.

WebRTC works on a long stack of protocols to abstract the media engine, codec, and transport layer into a bunch of APIs. The web developer just has to use these APIs to capture the media from a webcam, set up a peer connection, and transmit the data directly between any two browsers across the world with less than 500 milliseconds of latency.

ABR is also supported to a limited extent. WebRTC supports simulcast, where multiple video renditions are generated by the publisher and only a single rendition is selectively forwarded to each client based on its network conditions.
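The forwarding decision can be sketched in a few lines. This is a simplified model of what a media server might do per subscriber, not the logic of any specific server. The layer names, bitrates, and subscriber bandwidth estimates are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    rid: str           # label of the simulcast rendition
    bitrate_kbps: int  # approximate bitrate of this rendition


# Hypothetical renditions sent by one publisher.
LAYERS = [Layer("low", 150), Layer("mid", 500), Layer("high", 1500)]


def layer_for_subscriber(layers, downlink_kbps):
    """Forward the best layer the subscriber can afford, else the smallest one."""
    ordered = sorted(layers, key=lambda layer: layer.bitrate_kbps)
    affordable = [layer for layer in ordered if layer.bitrate_kbps <= downlink_kbps]
    return affordable[-1] if affordable else ordered[0]


# Assumed downlink estimates per subscriber, in kbps.
subscribers = {"alice": 3000, "bob": 600, "carol": 100}
forwarding = {name: layer_for_subscriber(LAYERS, kbps).rid
              for name, kbps in subscribers.items()}
print(forwarding)  # {'alice': 'high', 'bob': 'mid', 'carol': 'low'}
```

Unlike HLS, where the player picks a rendition itself, the choice in this model is made on the server side for each client.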

Cons of WebRTC

The challenge, however, lies in scaling. Because every peer connection consumes its own share of bandwidth, WebRTC natively does not scale well beyond a few thousand connections at most.
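Some simple arithmetic hints at why. The sketch below assumes every stream is sent over its own peer connection with no server in between. The helper names and the 2 Mbps bitrate are illustrative assumptions.

```python
def mesh_connections(participants: int) -> int:
    """Peer connections needed when every participant connects to every other one."""
    return participants * (participants - 1) // 2


def fanout_uplink_mbps(viewers: int, bitrate_mbps: float) -> float:
    """Upload bandwidth a single publisher needs to send one copy per viewer."""
    return viewers * bitrate_mbps


print(mesh_connections(4))            # 6
print(mesh_connections(10))           # 45
print(fanout_uplink_mbps(1000, 2.0))  # 2000.0
```

Connection count grows quadratically with participants in a full mesh, and a lone publisher's upload grows linearly with viewers, so both run out of headroom long before broadcast-sized audiences.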

Yet the promise of the standard is kept alive as customized WebRTC-CDNs are slowly coming up with innovative modifications to offer sub-second video delivery to over a million users.

RTMP vs HLS vs WebRTC

| | RTMP | HLS | WebRTC |
|---|---|---|---|
| Latency | 2-5 seconds | 20-30 seconds | <500 milliseconds |
| Scale | Limited due to persistent server-client connections. Needs a special RTMP proxy to scale | Millions of viewers | ≤10,000 viewers |
| Quality | No ABR. Quality depends on the bandwidth available to each client | ABR enables excellent network adaptability and superior quality | With simulcast, quality can adapt to network conditions |
| Reach | Nonexistent in last-mile delivery due to the decline and eventual death of Flash | Compatible with all HTML5 players. Supported by all client platforms | Currently supported by most modern browsers, iOS, and the Android ecosystem |
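One way to put the comparison to work is to encode it as data and filter by your own requirements. The sketch below uses the upper latency bounds from the table and treats RTMP as unavailable for last-mile delivery. The `shortlist_for_delivery` helper and the field names are hypothetical, and a real decision would weigh scale and quality too.

```python
# Upper latency bounds (seconds) and last-mile reach, taken from the table above.
PROTOCOLS = {
    "RTMP": {"max_latency_s": 5.0, "last_mile": False},
    "HLS": {"max_latency_s": 30.0, "last_mile": True},
    "WebRTC": {"max_latency_s": 0.5, "last_mile": True},
}


def shortlist_for_delivery(latency_budget_s: float) -> list:
    """Protocols usable for playback whose worst-case latency fits the budget."""
    return [name for name, props in PROTOCOLS.items()
            if props["last_mile"] and props["max_latency_s"] <= latency_budget_s]


print(shortlist_for_delivery(1.0))   # ['WebRTC']
print(shortlist_for_delivery(60.0))  # ['HLS', 'WebRTC']
```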

Build Live Video with 100ms

At the end of the day, there is no single winner among the video protocols that can solve all challenges of enabling live video at present. For most applications, a combination of the three aforementioned protocols works best by neatly bridging gaps in the entire lifecycle of video transmission - from ingest to delivery.

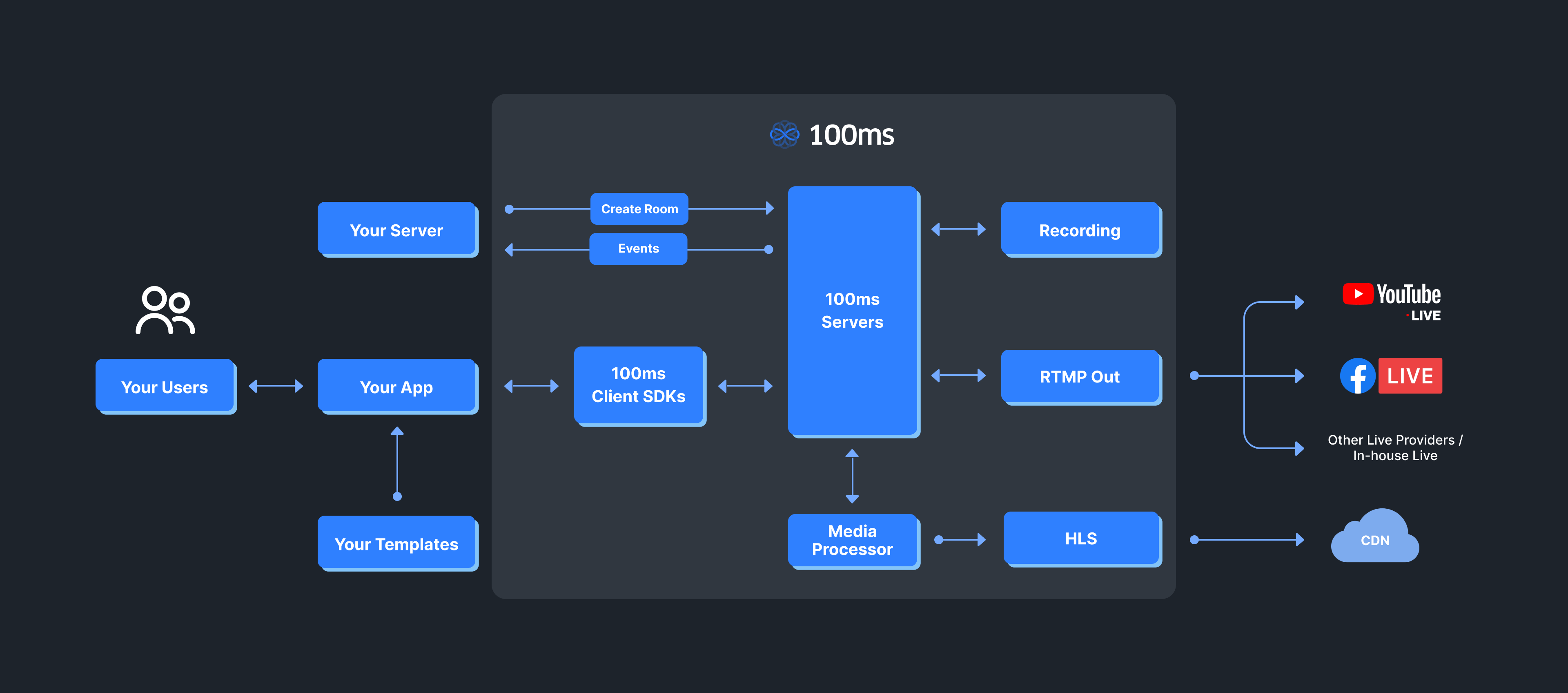

At 100ms, we are solving the complex challenges of scale, latency, and quality across the protocol stack to deliver the gold standard in video at an affordable cost to our customers. Our SDKs, built on the core of WebRTC, overcome scalability issues by routing media through an SFU as opposed to a regular peer-to-peer architecture.

Furthermore, with a robust SFU scaling solution and a hybrid model of live streaming integrated with WebRTC, 100ms can support high concurrent volumes ranging from 50,000 concurrent conferences (5-100 listeners) to about 5 simultaneous Super Bowl-scale events (>10k listeners).

As the world of video constantly expands, video protocols also need to adapt and support the needs of the future. The last protocol standing will be the one that is the most flexible yet stands on a strong foundation that promises consistent and optimal levels of operability.

At 100ms, this is our exact goal. By tapping into the strengths of the best of what video protocols have to offer, we are building a world-class solution that combines functionality, ease of adoption and affordability.
