Transcripts and AI-generated summaries, now live in Beta

July 18, 2023 · 4 min read

We're thrilled to announce two new features on our platform: Speaker Labelled Transcription and AI-generated Summary.

Recordings of live video interactions are valuable assets: they enable on-demand content, quality improvement, training, feedback, and audits. However, watching recordings is time-consuming. Transcriptions and summaries help you extract answers and insights from recordings faster. Our new transcription and summary capabilities convert spoken conversation into actionable data points, giving you faster analysis and a bird’s-eye view, and they make the information in your recordings searchable.

Moreover, we understand the hassle of working with multiple services and integrating various plugins. To streamline this, we have integrated these capabilities into our recording APIs and built a user-friendly dashboard UI for quick implementation.

If you’ve been a user of 100ms recordings, these new features will fit neatly into your consumption workflow.

Transcripts with Speaker Labels

While many services can transcribe videos, understanding who spoke when is crucial for gaining full context. Speaker labels significantly improve the quality of information extracted from transcriptions, enabling analysis of topics, interests, and speaking patterns across all of a user’s calls. Here’s what 100ms’ transcription does well:

- Speaker Labelled: By using the underlying audio track of each peer, we accurately identify speakers and map their spoken words. In contrast, most other transcription services use audio characteristics to segment speech and extract embeddings to identify speakers. That approach is sensitive to factors like the number of speakers, audio quality, and the use of short and filler phrases. Even after speaker attribution, it cannot tell you the speaker’s actual name or user ID.

- Support for Custom Vocabulary: This enables you to add non-dictionary words like names, slang, abbreviations, and more that the AI model may not recognise.

- Multiple File Formats: You have various file formats to choose from:

  - Text file (.txt) for direct consumption.

  - Subtitle file (.srt), which allows for synchronised playback.

  - Granular structured file (.json), which associates peer names with peer IDs and provides word-level and sentence-level granularity with timestamps, making it extremely versatile. It enables mapping all of a user's calls, identifying behavioural patterns, conducting sentiment analysis, and gauging live engagement levels for actionable insights.

- Generated Post Call Completion: Room Composite Recordings currently serve as a prerequisite for accessing transcription and summary features, but we plan to expand this in future releases.
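To make the structured output more concrete, here is a minimal Python sketch of the kind of analysis a speaker-labelled, timestamped transcript allows. The schema used here (`segments`, `peer_id`, `peer_name`, `start`, `end`, `text`) is an assumption made for illustration; the actual field names in the .json file may differ, so check a real output file before relying on it.

```python
from collections import defaultdict

# Hypothetical transcript structure for illustration only.
# The real .json schema produced by the platform may use different keys.
sample_transcript = {
    "segments": [
        {"peer_id": "p1", "peer_name": "Asha", "start": 0.0, "end": 4.5,
         "text": "Let's review the draft."},
        {"peer_id": "p2", "peer_name": "John", "start": 4.5, "end": 7.0,
         "text": "Can we ship this faster?"},
        {"peer_id": "p1", "peer_name": "Asha", "start": 7.0, "end": 9.0,
         "text": "Possibly, let's check."},
    ]
}


def talk_time_by_speaker(transcript):
    """Sum the seconds spoken by each peer, keyed by peer name."""
    totals = defaultdict(float)
    for segment in transcript["segments"]:
        totals[segment["peer_name"]] += segment["end"] - segment["start"]
    return dict(totals)


def count_phrase(transcript, peer_name, phrase):
    """Count case-insensitive occurrences of a phrase spoken by one peer."""
    needle = phrase.lower()
    return sum(
        segment["text"].lower().count(needle)
        for segment in transcript["segments"]
        if segment["peer_name"] == peer_name
    )


talk_time = talk_time_by_speaker(sample_transcript)
john_asks = count_phrase(sample_transcript, "John", "ship this faster")
```

Because every segment carries a speaker and timestamps, the same loop can be extended to per-speaker word counts or engagement over time without any speaker-identification step on your side.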

Call Summaries Generated by AI

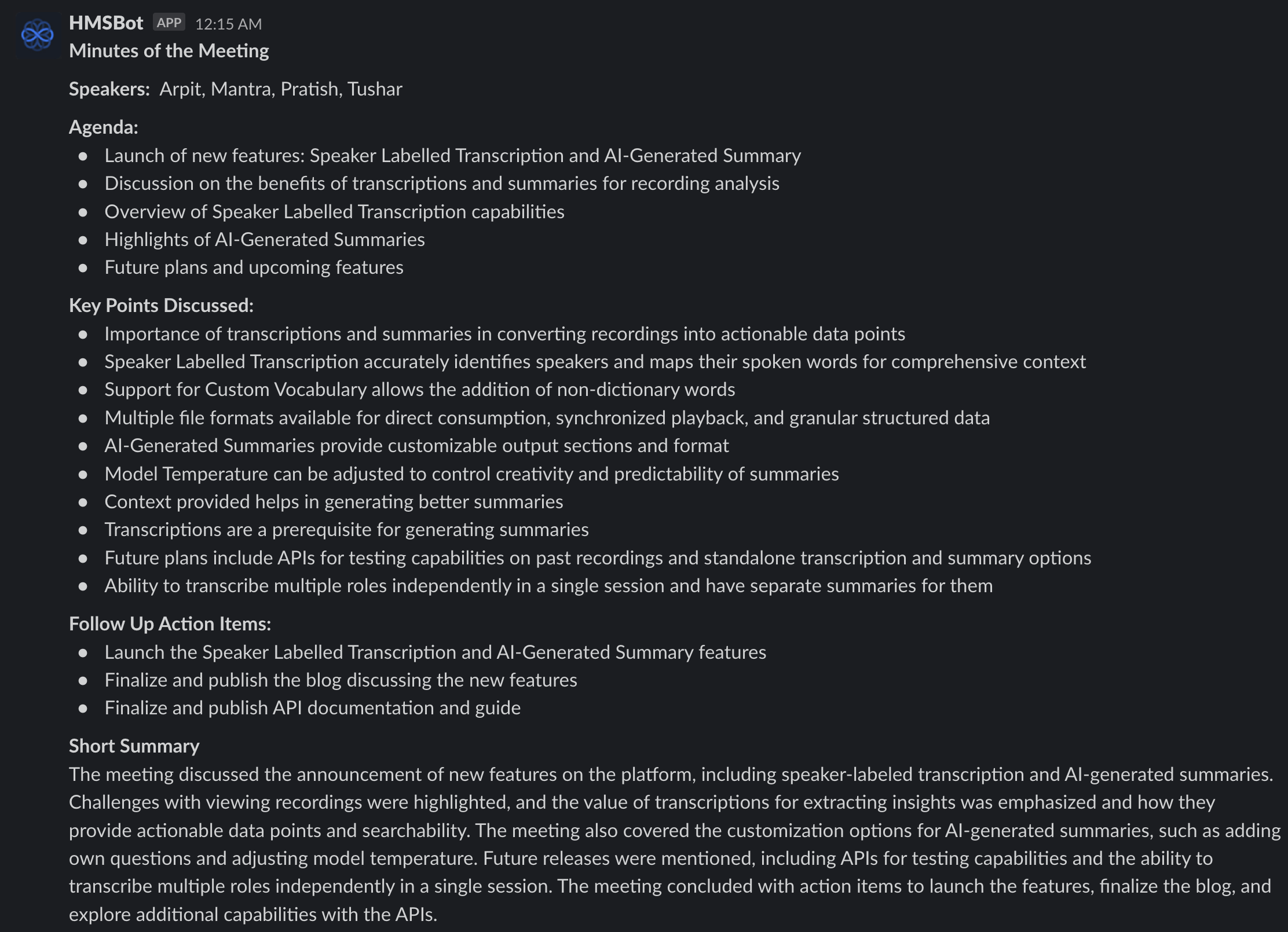

Initially skeptical of the value AI summaries could provide, we now heavily rely on them for design discussions and customer interactions. The following summary is generated from the transcript of the team's discussion on this blog's initial draft.

Our first summary release not only summarises calls in a Minutes of the Meeting format but is also customisable. Here’s what is possible:

- Customisable Output: You can:

  - Choose from our pre-defined default Output Sections: Agenda, Key Points Covered, Follow-up Action Items, and Short Summary.

  - Add your own questions in the Output Sections. This is very helpful for tracking specific inquiries, such as how many times John asked “Can we ship this faster?”.

  - Choose between bullets or paragraph as the Output Format for the different sections.

  - Increase the Model Temperature to make the AI more creative, or decrease it to make the output more deterministic and predictable. Higher creativity can yield deeper insights, but once in a while the AI will hallucinate and add imaginary fragments to the summary.

- Provide Context: Treat AI as a really smart colleague who has just joined a conversation. Help set context about the conversation and share expectations about the discussion points to output a better summary.

- Generating the summary requires a transcription as a prerequisite, and the current output format is a JSON file.
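The options above can be pictured as a small configuration object. The Python sketch below is only an illustration of how the pieces fit together: the class names, field names, and the 0.0 to 1.0 temperature range are assumptions, not the actual 100ms API or dashboard schema.

```python
from dataclasses import dataclass, field, asdict

# Default section titles as listed in this post.
DEFAULT_SECTIONS = (
    "Agenda",
    "Key Points Covered",
    "Follow-up Action Items",
    "Short Summary",
)
ALLOWED_FORMATS = ("bullets", "paragraph")


@dataclass
class OutputSection:
    """One section of the summary (hypothetical structure)."""
    title: str
    output_format: str = "bullets"

    def __post_init__(self):
        if self.output_format not in ALLOWED_FORMATS:
            raise ValueError(f"output_format must be one of {ALLOWED_FORMATS}")


def default_sections():
    """Build a fresh list of the pre-defined sections."""
    return [OutputSection(title) for title in DEFAULT_SECTIONS]


@dataclass
class SummaryConfig:
    """Summary settings (hypothetical; field names are assumptions)."""
    context: str = ""
    temperature: float = 0.5  # assumed range 0.0 (predictable) to 1.0 (creative)
    sections: list = field(default_factory=default_sections)

    def __post_init__(self):
        if not 0.0 <= self.temperature <= 1.0:
            raise ValueError("temperature must be between 0.0 and 1.0")


# A low temperature keeps the summary predictable; a custom question is
# added as an extra section alongside the defaults.
config = SummaryConfig(
    context="Team discussion of the initial draft of a blog post.",
    temperature=0.2,
)
config.sections.append(
    OutputSection('How many times did John ask "Can we ship this faster?"',
                  "paragraph")
)
payload = asdict(config)  # plain dict, ready to serialise as JSON
```

Keeping context, temperature, and sections in one object makes it easy to compare summaries generated with different settings for the same transcript.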

What’s in store for the future?

This is only the beginning for this feature. We have a lot planned for future releases. Here’s a sneak peek:

- Separate transcription and summary APIs which will allow you to transcribe and summarise past recordings.

- Standalone transcription and summary without the need for recording.

- Ability to transcribe multiple roles independently in a single session and have separate summaries for them.

Enable transcription for your recordings now

We’ve kept this feature completely free for now since this is in Beta. We’re looking to gather as much feedback as we can and can’t wait for you to try it out.

We’ve kept it super simple to get started with transcription and summary and written this neat little guide to help you with that.
